SR&ED for AI/ML Projects: From RAG to LoRA, What Counts and What Does Not

Navigate SR&ED eligibility for AI/ML work, including RAG, LoRA fine-tuning, and multi-agent frameworks like CrewAI.


Many AI/ML teams wonder whether their work qualifies for SR&ED, Canada's Scientific Research and Experimental Development tax incentive program. That work might be Retrieval-Augmented Generation (RAG), fine-tuning with Low-Rank Adaptation (LoRA), or multi-agent orchestration with frameworks such as CrewAI or AutoGen. The key question is always the same: are you resolving a technological uncertainty through systematic investigation?

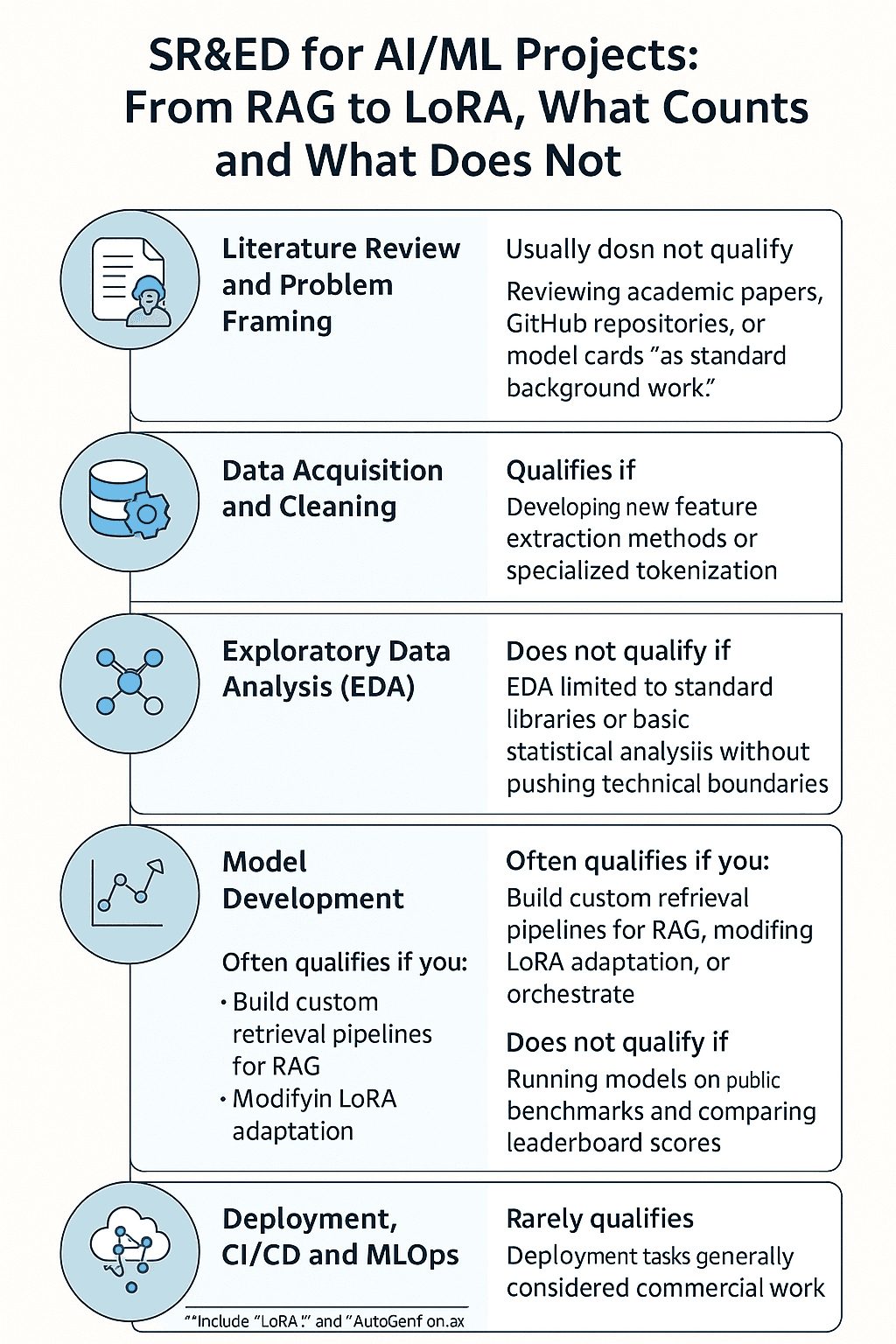

Below, we map a typical ML lifecycle from literature review to CI/CD deployment, with notes on which activities are most likely to qualify.

1. Literature Review and Problem Framing

Usually does not qualify

Reviewing academic papers, GitHub repositories, or model cards (for example, the LoRA paper or the AutoGen repository) is standard background work. It becomes relevant to a claim only when it directly informs a new experiment aimed at a technological uncertainty.

2. Data Acquisition and Cleaning

Qualifies if: You face non-trivial data problems that standard libraries cannot solve (for example, text deduplication for multilingual RAG or removing hallucinated citations from a retrieval corpus).

Does not qualify if: You use off-the-shelf cleaning scripts or commercial tools without modification.
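What usually separates a non-trivial data problem from routine cleaning is the experiment around it. The sketch below shows what such an experiment might look like for near-duplicate detection, under several assumptions. It compares documents using character 3-gram shingles and Jaccard similarity; this is a language-agnostic choice because it needs no tokenizer. It then sweeps the similarity threshold against a small set of human-labelled pairs. The function names, the shingle size, and the sample pairs are all illustrative assumptions, not a recommended method.

```python
def shingles(text: str, n: int = 3) -> set[str]:
    """Character n-grams of lowercased, whitespace-normalized text."""
    normalized = " ".join(text.lower().split())
    if len(normalized) < n:
        return {normalized}
    return {normalized[i:i + n] for i in range(len(normalized) - n + 1)}


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of intersection over size of union; two empty sets count as identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def sweep(pairs, thresholds, n: int = 3):
    """Precision and recall of 'duplicate' predictions at each threshold.

    pairs: iterable of (text_a, text_b, is_duplicate) with human labels.
    """
    pairs = list(pairs)  # iterated once per threshold
    results = {}
    for t in thresholds:
        tp = fp = fn = 0
        for left, right, is_dup in pairs:
            predicted = jaccard(shingles(left, n), shingles(right, n)) >= t
            if predicted and is_dup:
                tp += 1
            elif predicted:
                fp += 1
            elif is_dup:
                fn += 1
        precision = tp / (tp + fp) if tp + fp else 1.0
        recall = tp / (tp + fn) if tp + fn else 1.0
        results[t] = (precision, recall)
    return results


# Hypothetical labelled pairs (English and French legal snippets).
labelled = [
    ("The court dismissed the appeal.", "The court dismissed the appeal!", True),
    ("La cour a rejeté l'appel.", "La cour a rejeté l'appel", True),
    ("The court dismissed the appeal.", "The tenant paid the rent.", False),
]
for threshold, (p, r) in sweep(labelled, [0.5, 0.8, 0.95]).items():
    print(f"threshold={threshold:.2f} precision={p:.2f} recall={r:.2f}")
```

Logging each sweep, and the reason one threshold was chosen over another, is what turns this from a cleaning script into evidence of systematic investigation.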

3. Exploratory Data Analysis (EDA)

Qualifies if: You develop new feature extraction methods, identify non-obvious correlations that require algorithmic changes, or design specialized tokenization for LoRA fine-tuning in domain-specific contexts.

Does not qualify if: EDA is limited to standard tooling such as pandas and matplotlib, or to basic statistical analysis, without pushing technical boundaries.
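One way EDA can surface a tokenization problem is to measure fertility: the average number of subword pieces per word. Comparing fertility on domain vocabulary against general text shows whether the tokenizer fragments domain terms. The sketch below uses a toy greedy longest-match tokenizer and a tiny hand-written vocabulary as stand-ins for a real model's tokenizer; both are hypothetical. A large fertility gap on domain terms is the kind of observation that might motivate a tokenization experiment, not proof that one is needed.

```python
from functools import partial
from typing import Callable, Iterable


def greedy_subword_tokenize(word: str, vocab: set[str]) -> list[str]:
    """Toy greedy longest-match tokenizer (a stand-in, not a real model's).

    At each position, take the longest vocabulary entry that matches;
    if none matches, emit a single character and move on.
    """
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        while end > start + 1 and word[start:end] not in vocab:
            end -= 1
        pieces.append(word[start:end])
        start = end
    return pieces


def fertility(words: Iterable[str], tokenize: Callable[[str], list[str]]) -> float:
    """Average number of pieces per word; higher means more fragmentation."""
    words = list(words)
    if not words:
        return 0.0
    return sum(len(tokenize(w)) for w in words) / len(words)


# Hypothetical vocabulary and word lists, for illustration only.
vocab = {"the", "court", "appeal", "contract", "ing"}
tok = partial(greedy_subword_tokenize, vocab=vocab)
print("general:", fertility(["the", "court", "appeal"], tok))
print("domain:", fertility(["estoppel", "certiorari"], tok))
```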

4. Model Development

Often qualifies if you:

- Build custom retrieval pipelines for RAG that outperform baseline approaches

- Modify LoRA adaptation layers to handle sparse, domain-specific data

- Orchestrate multi-agent frameworks (for example, CrewAI or AutoGen) to achieve capabilities not documented in the literature

- Integrate multi-modal inputs in ways that require architectural innovation
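Modifying LoRA adaptation layers starts from the standard formulation. The pretrained weight W stays frozen, and the layer adds a scaled low-rank update: y = Wx + (alpha / r)·BAx. Here A is r × d_in and B is d_out × r. As described in the LoRA paper, A gets a small random Gaussian initialization and B starts at zero, so training begins from the base model's behavior. The pure-Python sketch below shows only that forward pass and the trainable-parameter count; it is not a training loop, and the class and method names are hypothetical. Changes aimed at sparse, domain-specific data would be experiments layered on top of this baseline.

```python
import random

Matrix = list[list[float]]


def matvec(m: Matrix, v: list[float]) -> list[float]:
    """Dense matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LoRALinear:
    """Frozen weight W (d_out x d_in) plus a trainable low-rank update B @ A.

    A is rank x d_in, B is d_out x rank; output = W x + (alpha / rank) * B (A x).
    """

    def __init__(self, weight: Matrix, rank: int, alpha: float, seed: int = 0):
        d_out, d_in = len(weight), len(weight[0])
        rng = random.Random(seed)
        self.weight = weight  # frozen during fine-tuning
        self.rank = rank
        self.scale = alpha / rank
        # Small random A, zero B: the initial update B @ A is exactly zero.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x: list[float]) -> list[float]:
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_params(self) -> int:
        # Only A and B are trained: rank * (d_in + d_out) values.
        return self.rank * (len(self.A[0]) + len(self.B))


W = [[1.0, 0.0], [0.0, 2.0]]
layer = LoRALinear(W, rank=1, alpha=2.0)
print(layer.forward([3.0, 4.0]))  # same as W x, because B starts at zero
print(layer.trainable_params())
```

For a real d_out × d_in layer, the update trains rank × (d_in + d_out) values instead of d_in × d_out. That gap is why LoRA is attractive when domain data is limited.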

5. Model Evaluation and Optimization

Qualifies if: You design non-standard benchmarks, invent new evaluation metrics, or systematically investigate model failures in edge cases (for example, RAG failing for low-resource languages).

Does not qualify if: You only run models on public benchmarks and compare leaderboard scores.
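A non-standard evaluation often starts by slicing a metric instead of averaging it away. The sketch below computes mean recall@k per language, so a weak slice such as a low-resource language is visible rather than hidden in an overall score. The function names, the query tuples, the document IDs, and the language codes ("en", and "sw" for Swahili) are hypothetical.

```python
from collections import defaultdict


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of relevant document IDs that appear in the top-k results."""
    if not relevant:
        raise ValueError("query has no relevant documents")
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def recall_by_language(queries, k: int) -> dict[str, float]:
    """queries: iterable of (language, retrieved_ids, relevant_ids).

    Returns mean recall@k per language so weak slices stand out.
    """
    totals: dict[str, float] = defaultdict(float)
    counts: dict[str, int] = defaultdict(int)
    for lang, retrieved, relevant in queries:
        totals[lang] += recall_at_k(retrieved, relevant, k)
        counts[lang] += 1
    return {lang: totals[lang] / counts[lang] for lang in totals}


# Hypothetical evaluation set: (language, ranked retrieved IDs, relevant IDs).
queries = [
    ("en", ["d1", "d2", "d3"], {"d1"}),
    ("en", ["d4", "d5"], {"d5", "d9"}),
    ("sw", ["d7", "d8"], {"d6"}),
]
print(recall_by_language(queries, k=2))
```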

6. Deployment, CI/CD, and MLOps

Rarely qualifies

Deployment tasks like containerization, API integration, or CI/CD pipelines are generally considered commercial work. Exceptions occur when they involve solving a unique technical bottleneck, such as enabling ultra-low-latency streaming for real-time RAG responses.
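A claim about ultra-low latency rests on measurements, so a tail-latency check against an explicit budget is a natural first artifact. The sketch below times repeated calls with `time.perf_counter` and compares a nearest-rank percentile to the budget. The stand-in `fake_rag_query`, the 50 ms budget, and the choice of p95 are illustrative assumptions, not a benchmark methodology.

```python
import math
import time
from typing import Callable


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile for 0 < p <= 100."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def measure_latency_ms(fn: Callable[[], object], runs: int = 50) -> list[float]:
    """Wall-clock time of each call to fn, in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def meets_budget(samples_ms: list[float], budget_ms: float, p: float = 95.0) -> bool:
    """True if the p-th percentile latency is within the budget."""
    return percentile(samples_ms, p) <= budget_ms


def fake_rag_query() -> str:
    # Hypothetical stand-in for retrieval + generation; replace with a real call.
    time.sleep(0.002)
    return "answer"


samples = measure_latency_ms(fake_rag_query, runs=20)
print(f"p95 = {percentile(samples, 95):.1f} ms, within 50 ms: {meets_budget(samples, 50.0)}")
```

Reporting a tail percentile rather than the mean matters for real-time responses, because occasional slow requests are what users notice.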

Quick SR&ED Checklist for AI/ML Work

Ask yourself:

- Uncertainty: Is there a specific technical problem that standard methods cannot solve?

- Novelty: Are you modifying algorithms or architectures in a way that is undocumented?

- Experimentation: Did you run structured tests, analyze results, and iterate?

- Documentation: Can you show experiment logs, code changes, and decision rationale?

- Advancement: Does your work push capability or performance beyond what is publicly available?
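For the Documentation item, structured records written as the work happens are generally easier to defend than notes reconstructed later. The sketch below is one lightweight option, not a CRA-prescribed format. Each iteration becomes one JSON line capturing the uncertainty, hypothesis, method, result, and conclusion. The field names, the `commit` field, the `append_record` helper, and the sample values are all assumptions chosen to mirror the checklist.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ExperimentRecord:
    """One entry in an append-only experiment log (field names are illustrative)."""

    uncertainty: str  # the technical question standard practice could not answer
    hypothesis: str   # what this iteration expects, and why
    method: str       # what was changed and how it was tested
    result: str       # observed outcome, ideally with numbers
    conclusion: str   # decision taken and its rationale
    commit: str = ""  # code revision used for the run, if any
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        return json.dumps(asdict(self), ensure_ascii=False, sort_keys=True)


def append_record(path: str, record: ExperimentRecord) -> None:
    """Append one record as a single JSON line (hypothetical helper)."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(record.to_json_line() + "\n")


# Hypothetical entry; values are placeholders, not real results.
record = ExperimentRecord(
    uncertainty="Can retrieval recall hold for low-resource languages?",
    hypothesis="Character n-gram indexing will reduce misses on rare scripts.",
    method="Swapped the tokenizer-based index for a 3-gram index; same eval set.",
    result="(record measured recall@k per language here)",
    conclusion="(record the decision and why)",
)
print(record.to_json_line())
```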

Example: Qualifying vs. Non-Qualifying

Qualifies: Creating a LoRA fine-tuning approach that enables a base LLM to run RAG queries in under 50 ms for a niche legal dataset without losing accuracy, requiring novel optimization strategies.

Does not qualify: Building a chatbot using an existing LoRA fine-tuned model and a standard RAG pipeline exactly as described in the LangChain documentation.

If you are working on an AI/ML project and want to know which parts of your workflow may qualify for SR&ED, fill out our contact form with details about your case. We can review your work and provide a tailored assessment.